AI Cheating Detectors Are Wrongly Accusing Students in 2026 | Cliptics

Last semester, a student at Yale School of Management was suspended. His crime? Writing exam answers that were too elaborate. A professor ran his work through an AI detector, the tool flagged it as machine-generated, and the university acted. The student sued, alleging wrongful suspension and discrimination. He was a non-native English speaker.

This is not an isolated case. Across universities in the United States and beyond, students are being accused, punished, and sometimes expelled based on the output of AI detection tools that are demonstrably unreliable. The tools that were supposed to protect academic integrity are now actively undermining it.

The Numbers Tell a Damning Story

A landmark Stanford study by Liang et al. found that AI detectors misclassified an average of 61.3% of TOEFL essays written by non-native English speakers as AI-generated. Let that number sink in. More than six out of every ten essays written by real humans were flagged as fake. Meanwhile, the same detectors achieved near-perfect accuracy on essays written by native English speakers.

A 2026 study evaluating commercial detectors on a balanced dataset of 192 texts found false positive rates ranging from 43% to 83% for authentic student writing. These are not edge cases. These are systemic failures baked into the technology itself.

The reason is straightforward. AI detectors measure signals such as perplexity (how predictable the text is to a language model), burstiness (how much sentence length and structure vary), and lexical diversity (how varied the vocabulary is). Non-native speakers tend to use simpler vocabulary, shorter sentences, and more predictable structures. So do people with certain neurodivergent conditions. The detectors read this predictability and uniformity as a sign of machine generation, when it is simply a different way of writing, whether in a second language or in a naturally structured style.
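To make these signals concrete, here is a toy Python sketch of two of them. It is an illustration under stated assumptions, not how Turnitin, GPTZero, or any commercial detector actually works: the function names are hypothetical, burstiness is approximated as the coefficient of variation of sentence length, lexical diversity as a simple type-token ratio, and perplexity is left out because it requires a trained language model.

```python
import re
import statistics

def sentence_lengths(text: str) -> list[int]:
    """Crude tokenizer (assumption): split on . ! ? and count words per sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]

def burstiness(text: str) -> float:
    """Toy burstiness proxy: population stdev of sentence length divided by its mean.
    Sentences of near-identical length push this value toward 0."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)

def lexical_diversity(text: str) -> float:
    """Type-token ratio: distinct words divided by total words (1.0 = no repeats)."""
    words = re.findall(r"[a-z']+", text.lower())
    return len(set(words)) / len(words) if words else 0.0

# Two invented samples: plain, even sentences vs. varied, richer phrasing.
simple = "I like my school. My school is very big. I study there every day. My friends study there too."
varied = ("Honestly? The campus overwhelmed me at first, with its sprawling quads "
          "and endless corridors of identical doors. Now I love it.")

if __name__ == "__main__":
    for name, text in [("simple", simple), ("varied", varied)]:
        print(f"{name}: burstiness={burstiness(text):.2f}, "
              f"lexical diversity={lexical_diversity(text):.2f}")
```

Neither sample was produced by AI, yet the plainer one scores lower on both measures, which is the kind of pattern a detector can mistake for machine output.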

International Students Bear the Heaviest Burden

The bias against non-native English speakers is not a minor flaw. It is a fundamental design problem.

International students already face enormous pressure. They are studying in a language that is not their first. They are navigating unfamiliar academic systems. Many are thousands of miles from home. And now they face a technology that treats their natural writing style as evidence of cheating.

In early 2025, dozens of international students at one U.S. university were accused of AI cheating based on Turnitin flags that were later shown to be false positives, caused by the tool's bias against non-native phrasing. For students on visas, an academic misconduct accusation threatens far more than a failing grade. Universities routinely warn international students that misconduct charges can lead to suspension or expulsion, which can jeopardize their visa status. The fear of deportation becomes very real.

The irony is brutal. A student writes an essay in their own words, using their own knowledge, in a language they have worked years to learn. An algorithm decides their writing is too simple, too predictable, too uniform to be human. And a system built on the presumption of academic integrity presumes them guilty.

Neurodivergent Students Get Caught Too

The bias extends beyond language. Neurodivergent students report false positive rates 3.2 times higher than those of their peers, particularly students on the autism spectrum who naturally write in formal, structured patterns. Their clear, methodical prose looks suspicious to an algorithm trained to expect a certain kind of human messiness.

This creates an impossible situation. Students are being told, in effect, that their authentic writing is too organized, too logical, or too clean to be believed. The very qualities that represent their strengths as thinkers become liabilities in a system designed to catch machines.

The Arms Race Nobody Wins

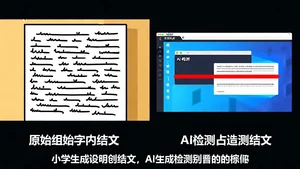

Faced with the constant threat of false accusations, students are increasingly turning to AI humanizer tools. Products like Humanize AI, Rewritify, and Ryne (which claims over 2.3 million student users) promise to make writing "undetectable" by AI checkers. Some students who never used AI to write their papers in the first place are running their own original work through humanizers, just to make sure it passes detection.

This is the absurd reality of 2026. Students who did not cheat are using AI tools to make their honest work look less like AI. The detection tools created the very problem they were supposed to solve.

Turnitin has responded by upgrading its detection to analyze sentence-level entropy and semantic coherence patterns. GPTZero has released version 3 with similar improvements. But the fundamental dynamic remains the same. Detectors get better, humanizers get better, and students remain caught in the middle.

What Universities Are Getting Wrong

The technology is deeply flawed, but the more urgent problem is how universities use it.

Studies and institutional guidance, including from the University of Kansas and MIT Sloan, have concluded that AI detector scores should not be used as standalone evidence in academic misconduct cases. The error rates are too high. The biases are too well documented. The consequences are too severe.

Yet many institutions continue to treat a Turnitin flag as a near certainty. Professors see a high AI probability score and move straight to disciplinary action, skipping the nuanced investigation that fairness demands.

OpenAI shut down its own AI text classifier in 2023, citing its low rate of accuracy. Quill.org and CommonLit discontinued their jointly developed AI Writing Check tool, stating that generative AI has become too sophisticated for reliable detection. When the company behind ChatGPT says its own detector does not work, that should mean something.

What Actually Works Instead

Some institutions are leading the way toward better approaches. Vanderbilt University paused its use of AI detectors over equity concerns. Other schools have introduced manual review processes, student appeal systems, and bias audits of detection tools.

The most effective strategies focus on process rather than policing: oral examinations in which students explain and defend their work, iterative assignments that let professors see ideas develop over time, in-class writing samples that establish a baseline for each student's style, and portfolio-based assessments that demonstrate sustained engagement with the material.

These methods require more effort from educators. They are harder to scale. But they do not carry the risk of destroying a student's academic career based on a statistical guess.

What Students Can Do Right Now

If you are a student who has been falsely accused, know that you have options. Keep all your drafts, notes, browser history, and research materials. Document your writing process. Several students have successfully fought false accusations by showing their revision history in Google Docs or Word.

Legal challenges are mounting. An Adelphi University student filed suit over AI essay allegations. A University of Minnesota PhD candidate was expelled over an AI-use allegation and took legal action. These cases could set precedent that shapes how universities handle AI detection going forward.

If your school is using AI detectors as the primary evidence for misconduct charges, push back. Ask what the tool's documented false positive rate is. Ask whether it has been tested for bias against non-native speakers. Ask what the appeals process looks like. Institutions that cannot answer these questions have no business using these tools to determine a student's future.
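A false positive rate matters more than it first appears, because most essays are honest. The sketch below is a back-of-the-envelope base-rate calculation, not a model of any specific tool: the function name `flag_breakdown` is hypothetical, and the inputs (1,000 essays, 10% of them AI-written, a 90% detection rate on AI-written essays) are assumptions chosen for illustration. Only the 43% false positive rate comes from the 2026 study cited earlier; the 1% figure is an assumed best case for comparison.

```python
def flag_breakdown(n_essays: int, ai_share: float, fpr: float, tpr: float) -> dict:
    """Expected detector flags across n_essays (all inputs are assumptions).

    ai_share: fraction of essays actually AI-written
    fpr:      false positive rate, i.e. share of honest essays flagged
    tpr:      true positive rate, i.e. share of AI-written essays flagged
    """
    honest = n_essays * (1 - ai_share)
    ai_written = n_essays * ai_share
    false_flags = honest * fpr       # honest students wrongly flagged
    true_flags = ai_written * tpr    # AI-written essays correctly flagged
    total = false_flags + true_flags
    return {
        "false_flags": false_flags,
        "true_flags": true_flags,
        # Of everything flagged, what fraction is honest work?
        "share_false": false_flags / total if total else 0.0,
    }

if __name__ == "__main__":
    for fpr in (0.01, 0.43):
        r = flag_breakdown(n_essays=1000, ai_share=0.10, fpr=fpr, tpr=0.90)
        print(f"FPR {fpr:.0%}: {r['false_flags']:.0f} honest essays flagged, "
              f"{r['share_false']:.0%} of all flags are false")
```

Under these assumed inputs, a 1% false positive rate means about 9% of flagged essays are honest work; at 43%, about 81% of flags land on students who did nothing wrong.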

The Bigger Picture

We are watching a technology that was deployed too quickly, tested too little, and trusted too much reshape the relationship between students and universities. AI cheating detectors were built on a reasonable premise: academic integrity matters. But the execution has been careless, and the people paying the price are overwhelmingly those who were already most vulnerable.

The solution is not better detectors. It is a fundamental rethinking of how we verify learning. Until that happens, every student submitting an essay faces a quiet lottery where the odds are stacked against those who write differently, think differently, or simply come from somewhere else.

That is not academic integrity. That is institutional failure.