AI Hallucinations: Why AI Makes Stuff Up | Cliptics

You've probably had this happen. You ask an AI chatbot something pretty straightforward, maybe a historical date, a recipe, or some coding help, and it answers with absolute confidence. Sounds great. Looks right. Then you double-check and realize it just made the whole thing up.

Welcome to AI hallucinations. It's 2026, and they're still happening. A lot.

I've been digging into why this keeps happening, what the major AI companies are doing about it, and most importantly what you can actually do to protect yourself from getting fooled. Because let's be honest, getting confidently wrong information from a machine that sounds smarter than your college professor is kind of unsettling.

What Exactly Is an AI Hallucination?

Let's keep this simple. An AI hallucination is when a language model generates information that sounds plausible but is partly or entirely fabricated. It's not lying, because lying implies intent. The AI doesn't know it's wrong. It doesn't know anything at all, really. It's pattern matching on steroids.

Think of it like a really confident friend who always has an answer, even when they have no idea what they're talking about. You ask them about quantum physics and they give you a five-minute explanation that sounds amazing but is roughly forty percent nonsense. That's an AI hallucination.

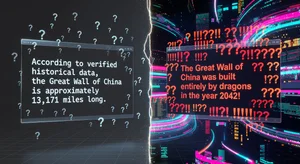

The tricky part? These aren't obvious errors. The AI doesn't say "I'm not sure about this." It delivers fabricated facts with the same confident tone it uses for verified information. Fake citations that look real. Invented statistics that sound reasonable. Historical events that never happened but feel like they should have.

Why Does This Keep Happening in 2026?

Here's the thing that surprises most people. AI hallucinations aren't a bug that companies forgot to fix. They're a fundamental consequence of how large language models work.

These models, whether we're talking about OpenAI's GPT series, Google's Gemini, Anthropic's Claude, or any of the others, work by predicting the most likely next token in a sequence. They're not looking things up in a database. They're not reasoning from first principles. They're generating text that statistically fits the pattern of what should come next.

And that's the root of the problem. Statistical likelihood and factual accuracy are not the same thing. Sometimes the most statistically likely next word or phrase is wrong. The model has seen millions of examples of confident, well-structured text, so it produces confident, well-structured text, regardless of whether the content is accurate.
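To make that concrete, here's a toy sketch in Python. The scores are invented for illustration and don't come from any real model, and a real model works over a vocabulary of many thousands of tokens rather than three. The point is only that the selection step picks what is probable, with nothing in it that checks what is true.

```python
import math
import random

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits.values())
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}

# Hypothetical scores for the token that follows
# "The capital of Australia is". The numbers are made up.
toy_logits = {"Sydney": 2.0, "Canberra": 1.5, "Melbourne": 0.5}

probs = softmax(toy_logits)

# Greedy decoding takes the single most probable token.
greedy_choice = max(probs, key=probs.get)

# Sampling draws tokens in proportion to their probability instead.
rng = random.Random(0)
samples = rng.choices(list(probs), weights=list(probs.values()), k=1000)
share_wrong = sum(tok != "Canberra" for tok in samples) / len(samples)

print("greedy pick:", greedy_choice)
print("share of sampled answers that are wrong:", share_wrong)
```

In this made-up distribution the wrong city has the highest score, so greedy decoding returns it every time, and sampling returns a wrong city most of the time. Nothing in the procedure knows or cares which answer is correct.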

There are a few specific situations where hallucinations get worse. Rare or niche topics where the training data is thin. Recent events that happened after the training cutoff. Anything involving precise numbers, dates, or citations. And complex reasoning chains where small errors compound into completely wrong conclusions.
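The compounding point is easy to see with a little arithmetic. The sketch below assumes every reasoning step succeeds independently with the same probability, which is a simplification rather than a measured property of any model, and the 95 percent figure is just an example.

```python
def chain_accuracy(step_accuracy: float, steps: int) -> float:
    """Chance that every step is right, assuming independent steps."""
    return step_accuracy ** steps

# Example figure only: each step is right 95% of the time.
for steps in (1, 5, 10, 20):
    print(steps, "steps:", round(chain_accuracy(0.95, steps), 3))
```

Under those assumptions, a ten-step chain comes out fully correct only about 60 percent of the time, even though each individual step looks reliable.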

Microsoft, Google, and OpenAI have all published research in the last year acknowledging that hallucination rates, while improving, remain a significant challenge. Anthropic's recent technical reports show that even with retrieval-augmented generation and other grounding techniques, no model has achieved a zero hallucination rate on standard benchmarks.

The Real World Damage

This isn't just an academic problem. People are making decisions based on AI-generated information every day.

Lawyers have cited fake court cases generated by AI in actual legal filings. Students have submitted papers with fabricated sources. Medical information generated by chatbots has included dosage recommendations that were dangerously wrong. Businesses have made strategic decisions based on AI-generated market analysis that turned out to be fiction.

And here's what makes this particularly dangerous in 2026. AI tools are now deeply embedded in workflows everywhere. They're drafting emails, writing reports, generating code, creating marketing copy, answering customer questions. The sheer volume of AI-generated content means the opportunities for hallucinated information to spread are enormous.

The scary part isn't that AI sometimes gets things wrong. Humans get things wrong too. The scary part is the scale and the confidence. When a human isn't sure, they usually hedge. They say "I think" or "maybe." AI models don't naturally do that. They present everything with equal authority, whether it's a well established scientific fact or something they just made up on the spot.

What the Big Companies Are Doing

To be fair, AI companies aren't ignoring this problem. There's been real progress, even if the problem isn't solved.

OpenAI has invested heavily in reinforcement learning from human feedback to reduce hallucinations in its latest models. Google's Gemini includes built-in fact-checking against Google's search index, which helps but doesn't eliminate the issue. Anthropic has focused on what it calls constitutional AI approaches, training models to be more honest about uncertainty. Perplexity has built its entire product around citing sources for every claim, which doesn't prevent hallucinations but makes them easier to catch.

Retrieval-augmented generation, or RAG, has become the standard approach. Instead of relying solely on what the model learned during training, RAG systems pull in relevant information from external sources before generating a response. It helps significantly with factual queries, but it introduces its own problems. What if the retrieved sources are wrong? What if the model misinterprets the retrieved information?
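Here's a minimal sketch of the retrieval half of that idea. Everything in it is a stand-in: the three-sentence `DOCUMENTS` list is a hypothetical knowledge base, the word-overlap ranking replaces the search index or vector store a real system would use, and the final prompt would be handed to whatever model you're working with.

```python
import re

# Hypothetical in-memory knowledge base, for illustration only.
DOCUMENTS = [
    "Canberra is the capital city of Australia.",
    "Sydney is the largest city in Australia by population.",
    "Retrieval augmented generation adds retrieved text to the prompt.",
]

def tokenize(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, k=2):
    """Rank documents by how many words they share with the question."""
    q_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(q_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(question, documents, k=2):
    """Put the top-k retrieved sources in front of the question."""
    sources = retrieve(question, documents, k)
    numbered = "\n".join(
        f"[{i}] {doc}" for i, doc in enumerate(sources, start=1)
    )
    return (
        "Answer using only the sources below. "
        "If they do not contain the answer, say so.\n\n"
        f"Sources:\n{numbered}\n\nQuestion: {question}"
    )

question = "What is the capital of Australia?"
prompt = build_prompt(question, DOCUMENTS)
print(prompt)
```

Notice that the second retrieved source is about Sydney. It's accurate, but it doesn't answer the question, and a model that leans on the wrong source can still get things wrong. That's the misinterpretation risk in miniature.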

There's also been interesting work on uncertainty quantification. Some newer models can assign confidence scores to their outputs, essentially saying "I'm ninety percent sure about this but only forty percent sure about that." It's promising, but it's not widely deployed yet in consumer-facing tools.
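One simple way to get a rough confidence signal, and I'm not claiming this is how any particular vendor does it, is to ask the same question several times and measure how often the answers agree. The sketch below assumes you already have the sampled answers in a list; the `sampled` values are hypothetical.

```python
from collections import Counter

def agreement_confidence(answers):
    """Return the most common answer and the share of samples that agree."""
    if not answers:
        raise ValueError("need at least one sampled answer")
    # Normalize so "Canberra" and "canberra " count as the same answer.
    normalized = [a.strip().lower() for a in answers]
    top, count = Counter(normalized).most_common(1)[0]
    return top, count / len(normalized)

# Hypothetical answers from asking the same question five times.
sampled = ["Canberra", "canberra", "Sydney", "Canberra ", "Canberra"]
answer, confidence = agreement_confidence(sampled)
print(answer, confidence)
```

Agreement is only a proxy. A model can be consistently wrong, so a high score means "stable," not "verified."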

What You Can Actually Do About It

Okay, here's the practical part. Because knowing why AI hallucinates is interesting, but knowing how to deal with it is useful.

Never trust a single source. This is the big one. If an AI tells you something important, verify it independently. Check the actual source. Google it. Look it up in a reference you trust. This takes thirty seconds and can save you from embarrassment or worse.

Watch for specifics. AI hallucinations often involve very specific details like exact dates, precise statistics, named studies, or direct quotes. If an AI gives you a very specific claim, that's exactly the kind of thing you should verify. Paradoxically, the more specific and impressive a fact sounds, the more it deserves a second look.

Ask the AI to show its work. Many modern AI systems can provide citations or explain their reasoning. Ask them to. If a model claims a study showed something, ask for the study name and authors. If it can't provide them, or if the ones it provides don't check out, that's your red flag.

Learn the weak spots. AI is much more likely to hallucinate about niche topics, very recent events, numerical data, and anything requiring multi-step reasoning. If your question falls into one of these categories, apply extra scrutiny.

Use specialized tools for critical tasks. For medical, legal, financial, or safety-critical information, don't rely on general-purpose chatbots. Use domain-specific tools that have been validated for accuracy in those fields. A general AI assistant is great for brainstorming, but it's not a doctor, lawyer, or financial advisor.

Pay attention to phrasing. If an AI starts a response with something like "Based on my training data" or provides extremely confident answers to questions that should have nuanced responses, be cautious. Real expertise usually comes with caveats and context. Fake confidence sounds smooth but lacks depth.
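If you want a small helper for the "watch for specifics" tip, here's a rough sketch that highlights the kinds of details worth checking by hand. The patterns are my own simple guesses and will miss plenty and over-flag some things, and the sample reply is invented text, not a real study. It doesn't verify anything; it only builds you a checklist.

```python
import re

# Simple, assumed patterns for details that are worth verifying.
PATTERNS = {
    "year": r"\b(?:1[5-9]|20)\d{2}\b",
    "percentage": r"\b\d+(?:\.\d+)?\s?%",
    "citation": r"\bet al\.",
    "quote": r"\"[^\"]{10,}\"",
}

def flag_specifics(text):
    """Return (kind, matched_text) pairs for details to check by hand."""
    hits = []
    for kind, pattern in PATTERNS.items():
        for match in re.finditer(pattern, text):
            hits.append((kind, match.group(0)))
    return hits

# Invented example reply, not a real study or statistic.
reply = "A 2019 study by Smith et al. found that 73% of users agreed."
for kind, text in flag_specifics(reply):
    print(f"verify {kind}: {text}")
```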

Where This Goes From Here

The honest truth? AI hallucinations aren't going away anytime soon. They'll get less frequent, yes. The models will improve. The guardrails will get better. But as long as language models work by predicting probable text rather than reasoning from verified knowledge, there will always be some level of risk.

What's changing is our relationship with AI output. We're moving from a phase where people treated AI like an oracle to a phase where people treat it more like a very smart but sometimes unreliable colleague. You wouldn't blindly trust everything a coworker told you without checking, right? Same principle applies here.

The companies building these tools have a responsibility to make hallucinations less frequent and more detectable. But we as users have a responsibility too. Critical thinking didn't stop being important just because we got fancy new technology. If anything, it's more important now than ever.

So the next time an AI confidently tells you something that sounds a little too good, a little too specific, or a little too clean, take a beat. Check it. Because in 2026, the most valuable skill isn't knowing how to use AI. It's knowing when not to trust it.