Generative AI Image Creation in 2026: Flux vs Midjourney | Cliptics

Let's skip the preamble. You want to know which AI image generator is worth your time in 2026 and which ones are overhyped. Here is the honest answer, broken down by what each model actually does well versus what the marketing says it does.

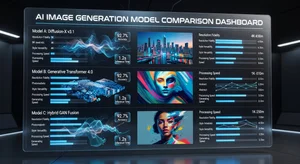

The three names you keep seeing are Flux, Midjourney, and Stable Diffusion. They're not equal alternatives. They serve different use cases, have different strengths, and make different tradeoffs. Picking the wrong one for your workflow costs you time and money. Picking the right one saves both.

Flux: The Prompt-Following Model

Flux has earned its reputation as the most reliable prompt follower of the current generation. If you write a detailed prompt, Flux does what you asked. Specific object placement, specific lighting conditions, specific text rendered inside the image: these are areas where Flux consistently outperforms its competition.

The reason is architectural. Flux was built with prompt adherence as a core design goal, and that shows in the outputs. When you ask for a red cup on the left side of a wooden table with warm afternoon light coming through a window, you get that. Not an interpretation of that. Not a creative riff on that. The actual thing you described.

For commercial work, this is enormous. Brand guidelines require consistency. Campaigns require specific compositions. Marketing teams have been burned repeatedly by image models that produce beautiful images that have nothing to do with the brief. Flux breaks that pattern.

The tradeoff is that Flux's aesthetic sensibility is relatively neutral. It produces technically accurate images that don't have a strong visual personality. If you're looking for a model to surprise you with something you didn't know you wanted, Flux is not your best option. If you need reliable execution of a specific vision, it is the current leader.

Flux-dev and Flux-pro are both strong. Flux-2-pro, available through platforms like Cliptics, offers a significant quality jump for photorealistic outputs specifically.

Midjourney: The Aesthetic Model

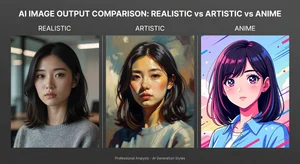

Midjourney's output is immediately recognizable. There is a specific quality to it, a certain way of handling light and texture and compositional drama, that has become influential enough that other models are measured against it. Midjourney made AI image generation look like art rather than a technical demo, and that shift matters more than it might seem.

The strength here is emotional impact. Midjourney images feel intentional in a way that is difficult to quantify but easy to recognize. Characters have weight. Environments have mood. The images do not merely describe what you asked for but seem to interpret it with visual intelligence.

The weaknesses are equally distinctive. Midjourney's prompt following is inconsistent. Highly specific compositional requests are frequently ignored in favor of what the model thinks looks better. Text rendering has improved but remains unreliable compared to Flux. Hands and fine anatomical details have gotten better, but the model still trips up on complex poses.

The Discord-based interface remains a genuine friction point for professional workflows. The web interface has improved but the core interaction model still feels designed for exploration rather than production. If your workflow requires precise iteration and consistent repeatability, Midjourney's creative autonomy becomes a liability.

For concept exploration, mood boarding, and creative direction work where the goal is inspiring a direction rather than executing a specific vision, Midjourney is still the strongest choice in 2026. Artists and art directors use it heavily in early stages of projects precisely because it generates unexpected directions worth pursuing.

Stable Diffusion: The Control Model

Stable Diffusion is not a single model. It is an ecosystem of open-source models, fine-tunes, ControlNet extensions, and community-built tools that collectively offer more control over output than any closed system.

That distinction matters. When people say Stable Diffusion, they usually mean one of several things: the base SDXL or SD 3.5 models, a specific fine-tune trained on a particular aesthetic, a ControlNet workflow that constrains generation using pose or depth or edge maps, or some combination of all three.

The raw quality of base Stable Diffusion models is competitive but not best-in-class for general photorealistic outputs. Where the ecosystem wins is customization. If you need a model trained specifically on architectural renders, product photography, anime illustration, or any other specialized domain, the Stable Diffusion community has almost certainly built and shared one.

ControlNet workflows remain in a category of their own for precise spatial control. The ability to use a pose skeleton, a depth map, or an edge detection image to constrain how a scene is composed gives Stable Diffusion users a level of deterministic control that Flux and Midjourney cannot match. For workflows that require generating consistent character positions across multiple images, or maintaining specific spatial relationships between objects, this remains the professional standard.
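To make the "edge detection image" concrete: an edge-conditioned ControlNet workflow takes a black-and-white map showing where outlines fall and generates an image that respects those outlines. The sketch below produces such a map with a plain gradient threshold. Real workflows commonly use a Canny detector from an image library instead, and the function name `edge_map` and its `threshold` parameter are illustrative assumptions, not part of any Stable Diffusion tool.

```python
def edge_map(gray, threshold=50):
    """Return a binary edge map (0 or 255) for a grayscale image given as a list of rows."""
    height, width = len(gray), len(gray[0])
    edges = [[0] * width for _ in range(height)]
    # Border pixels are left at 0 because they lack a full neighborhood.
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            # Central differences approximate the horizontal and vertical gradient.
            gx = gray[y][x + 1] - gray[y][x - 1]
            gy = gray[y + 1][x] - gray[y - 1][x]
            if abs(gx) + abs(gy) >= threshold:
                edges[y][x] = 255
    return edges


# Hypothetical 6x6 test image: dark left half, bright right half.
image = [[0, 0, 0, 200, 200, 200] for _ in range(6)]
edges = edge_map(image)
for row in edges:
    print(row)
```

The white pixels trace the boundary between the dark and bright halves. In a ControlNet workflow, a map like this is what pins the composition in place while the prompt controls everything else.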

The cost of this power is complexity. Running Stable Diffusion workflows well requires technical comfort that Midjourney and Flux don't demand. Local installation with a capable GPU, or using a service with the right fine-tune loaded, requires setup time and ongoing maintenance. This is not a complaint; it is an honest description of the tradeoff.

Practical Comparisons That Actually Matter

Text rendering: Flux leads clearly. DALL-E 3, the OpenAI model not covered in detail here, is competitive. Midjourney and the base Stable Diffusion models remain behind.

Consistent characters across images: Stable Diffusion ControlNet workflows lead for precise consistency. Midjourney's character reference feature has improved significantly. Flux is reliable but offers fewer consistency controls.

Speed: Midjourney and Flux through API services are fast. Local Stable Diffusion speed depends entirely on your hardware. Cloud Stable Diffusion services have narrowed the gap.

Cost at scale: Open-source Stable Diffusion running locally has no per-generation fee, only hardware and electricity costs. API-based Flux and Midjourney pricing varies by plan and usage volume. At high volume, local Stable Diffusion often wins economically.

Photorealism: Flux and fine-tuned Stable Diffusion models lead for realistic photography. Midjourney's photorealism is strong but has a recognizable aesthetic quality that can reveal AI origin to trained eyes.

Artistic styles: Midjourney leads for general artistic impact. Stable Diffusion fine-tunes dominate specific artistic domains. Flux can execute requested styles accurately but without spontaneous creative interpretation.

The Prompt Engineering Difference

One factor that changes everything: how well you write prompts. The performance gap between models narrows significantly when the prompt writer is skilled. Midjourney's best results require understanding which style references and technical terms it responds to. Flux rewards specificity. Stable Diffusion fine-tunes require knowing what the model was trained on.

Tools that help bridge this gap have genuine value. A prompt enhancer that expands a basic concept into a detailed, model-appropriate prompt can dramatically improve outputs from any of these systems, particularly for users who are still developing their prompt engineering instincts.

This is not a knock on beginners. Prompt writing is a learned skill that takes time, and the gap between a basic prompt and an optimized one produces visibly different results across all three models.
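As a rough illustration of what a prompt enhancer does, here is a minimal template-based sketch that expands a basic subject into a more specific prompt. The function `enhance_prompt` and its parameters are hypothetical, and a real enhancer would likely do far more (for example, rewriting with a language model and tailoring the wording to each image model).

```python
def enhance_prompt(subject, placement=None, lighting=None, style=None):
    """Expand a basic subject into a more specific, comma-separated prompt."""
    parts = [subject.strip()]
    if placement:
        parts.append(placement.strip())
    if lighting:
        parts.append(f"{lighting.strip()} lighting")
    if style:
        parts.append(f"in the style of {style.strip()}")
    return ", ".join(parts)


basic = enhance_prompt("a red cup")
detailed = enhance_prompt(
    "a red cup",
    placement="on the left side of a wooden table",
    lighting="warm afternoon",
)
print(basic)     # a red cup
print(detailed)  # a red cup, on the left side of a wooden table, warm afternoon lighting
```

The point of the structure is that each optional field forces the writer to make a decision they might otherwise leave to the model.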

What the 2026 Landscape Actually Looks Like

The clean winner narrative that technology coverage often reaches for does not apply here. These models have genuinely differentiated into different tools for different purposes, and the differentiation is sharpening rather than blurring.

Midjourney is investing in its aesthetic identity and creative autonomy. Its recent updates have focused on character consistency and style control, extending what it does well rather than trying to compete on prompt precision.

Flux development has continued to push on prompt adherence and commercial use cases, with improved inpainting and editing capabilities that make it more useful for iterative production work.

Stable Diffusion 3.5 and community models built on it have focused on capability improvements that benefit power users: better anatomy, improved text, faster inference, and continued growth of the fine-tune ecosystem.

The practical recommendation for 2026 is not to pick one and commit. It is to understand which tool solves which problem and move between them. Most professional digital artists and marketing teams working at scale use more than one. The switching cost between them, especially through platforms that provide unified access, is low enough that specialization makes more sense than loyalty.

If you are starting out and can only learn one, the decision depends on your primary use case. Commercial work with specific requirements points toward Flux. Creative exploration and concept development point toward Midjourney. Technical workflows requiring precise control point toward Stable Diffusion.

If you are experienced and building a production workflow, you probably already know you need more than one. The question is which combinations to invest in, and that depends on the specific bottlenecks in your current process.

The models keep improving. The performance gaps that exist now will look different in six months. What will not change is the basic principle: different tools are built for different purposes, and using the right tool for the job produces better results than any amount of loyalty to a single platform.

The Pricing Reality in 2026

One factor that influences tool choice more than most comparisons acknowledge is pricing structure and what it means for your actual usage patterns.

Midjourney's subscription model works well for users who generate moderate volumes of images regularly. The monthly subscription costs are predictable, and the unlimited or high-volume generation included at upper tiers is genuinely useful for active creative workflows. It becomes expensive for occasional users who need high volume in bursts, since a flat subscription does not scale well to uneven usage.

Flux through API-based access has more variable pricing that can be favorable at high volumes when using efficient generation parameters. The per-generation cost model means you pay for what you use, which aligns well with professional workflows where generation happens in focused production sessions rather than continuous exploration.
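The difference between the two pricing shapes is easiest to see with numbers. The sketch below compares a flat subscription with overage charges against pure per-generation pricing for the same total volume spread evenly or concentrated in one month. Every figure is a made-up assumption for illustration, not any vendor's actual plan, and prices are kept in cents to avoid floating-point rounding.

```python
def subscription_total(monthly_images, fee_cents, included_images, overage_cents):
    """Total cost in cents for a flat monthly fee plus a per-image overage charge."""
    total = 0
    for images in monthly_images:
        extra = max(0, images - included_images)
        total += fee_cents + extra * overage_cents
    return total


def pay_per_use_total(monthly_images, price_cents):
    """Total cost in cents when every generation is billed individually."""
    return sum(monthly_images) * price_cents


# Hypothetical usage: 4,000 images over four months, spread evenly or in one burst.
steady = [1000, 1000, 1000, 1000]
bursty = [0, 0, 0, 4000]

for label, usage in (("steady", steady), ("bursty", bursty)):
    sub = subscription_total(usage, fee_cents=3000, included_images=1000, overage_cents=5)
    ppu = pay_per_use_total(usage, price_cents=4)
    print(f"{label}: subscription ${sub / 100:.2f}, pay-per-use ${ppu / 100:.2f}")
```

Under these assumed prices the subscription is cheaper for steady usage ($120.00 versus $160.00) and much more expensive for the bursty pattern ($270.00 versus $160.00), because the idle months still cost the monthly fee and the burst month incurs overage.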

Stable Diffusion's pricing advantage for heavy users running local hardware still holds. Owning hardware has a cost, but that cost is bounded, and after the initial equipment investment the marginal cost of each additional generation approaches zero. For users generating at very high volumes, the economics of local Stable Diffusion are difficult to match with any subscription model.
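A break-even estimate makes "very high volumes" concrete. The sketch below computes how many images it takes before buying hardware beats paying per generation. The hardware price, API price, and local per-image running cost are placeholder assumptions; substitute your own numbers.

```python
import math


def break_even_images(hardware_cost, api_price_per_image, local_cost_per_image):
    """Smallest image count at which local generation costs no more than the API.

    Returns None when local generation never catches up, that is, when its
    per-image running cost is not below the API price.
    """
    saving_per_image = api_price_per_image - local_cost_per_image
    if saving_per_image <= 0:
        return None
    return math.ceil(hardware_cost / saving_per_image)


# Hypothetical figures: a $2,000 GPU workstation, $0.04 per API image,
# and $0.002 of electricity per locally generated image.
threshold = break_even_images(2000, 0.04, 0.002)
print(threshold)
```

With these assumed figures the break-even point falls a little above 52,000 images. Below that volume the API is cheaper; above it, every additional local image widens the gap. The estimate ignores setup and maintenance time, which also belong on the local side of the ledger.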

The hidden cost that all pricing comparisons tend to undercount is iteration time. A model that produces closer-to-correct results on the first generation costs less in practice than a nominally cheaper model that needs more iterations to reach an acceptable output. This particularly favors Flux for commercial applications where the brief is specific and revision cycles are expensive.
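One way to put a number on this: if each generation independently has some probability of being acceptable, the expected number of attempts per accepted image is one divided by that probability. The sketch below applies that to two hypothetical models. The prices and acceptance rates are invented for illustration, and the independence assumption is a simplification.

```python
def cost_per_accepted_image(price_per_generation, acceptance_rate):
    """Expected spend to obtain one acceptable image, assuming independent attempts."""
    if not 0 < acceptance_rate <= 1:
        raise ValueError("acceptance_rate must be in the range (0, 1]")
    # With success probability p per attempt, the expected number of attempts is 1 / p.
    return price_per_generation / acceptance_rate


# Hypothetical models: one pricier but precise, one cheaper but hit-or-miss.
precise = cost_per_accepted_image(price_per_generation=0.05, acceptance_rate=0.5)
cheap = cost_per_accepted_image(price_per_generation=0.03, acceptance_rate=0.2)
print(f"precise model: ${precise:.2f} per accepted image")
print(f"cheap model:   ${cheap:.2f} per accepted image")
```

Here the model with the higher sticker price works out to about $0.10 per usable image, against about $0.15 for the cheaper one. The gap grows further once the time a person spends reviewing each rejected attempt is counted.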