AI-Written Content: Can Readers Tell? | Cliptics

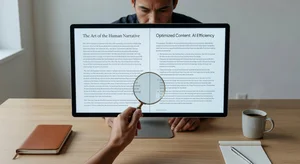

I ran an experiment last month that genuinely shook my assumptions about AI writing. I took five blog posts, three written by human writers and two generated by AI tools, then asked 200 content marketers and regular readers to identify which was which. The results? They got it right only 52% of the time. Basically a coin flip.

That number floored me. Not because I thought AI writing was bad. I knew it had gotten better. But because many of these weren't random people off the street. They were content professionals. People who spend their entire day reading, editing, and publishing written work. And they couldn't reliably tell the difference.

So I dug deeper. What's actually happening with AI content in 2026? Where does it fool everyone, where does it still stumble, and what does this mean for anyone who creates content for a living?

The Experiment Setup

Let me walk you through what I actually did, because methodology matters when you're making claims like these.

I selected five blog posts between 1,200 and 1,800 words each. All in the digital marketing niche. The three human-written pieces came from experienced freelance writers who had no idea they were being tested. The two AI pieces were generated using current 2026-era tools, then given one light editing pass for factual accuracy only. No heavy rewriting. No injecting personality. Just fact-checking.

Each participant read all five posts in randomized order and marked each one as "human" or "AI." They also rated confidence on a 1-5 scale.
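For anyone who wants to sanity-check the "coin flip" framing, here is a minimal sketch of an exact binomial test against pure guessing. It assumes every participant judged all five posts (so 1,000 judgments in total) and treats each judgment as independent, which is a simplification since each person contributed five answers. The function name and the rounded count of 520 correct answers are my own illustrative choices, not figures pulled from the raw response data.

```python
from math import comb


def binomial_two_sided_p(correct: int, total: int) -> float:
    """Exact two-sided p-value for `correct` successes against a fair coin."""
    # With p = 0.5 the distribution is symmetric, so "as extreme or more"
    # means at least as far from total / 2 in either direction.
    distance = abs(correct - total / 2)
    extreme = sum(
        comb(total, k)
        for k in range(total + 1)
        if abs(k - total / 2) >= distance
    )
    return extreme / 2 ** total


# Assumption: all 200 participants rated all 5 posts.
participants = 200
posts = 5
judgments = participants * posts      # 1000 judgments in total
correct = round(0.52 * judgments)     # 52% accuracy -> 520 correct

p_value = binomial_two_sided_p(correct, judgments)
print(f"{correct}/{judgments} correct, two-sided p = {p_value:.3f}")
```

Under these assumptions the p-value lands around 0.2, well above the usual 0.05 cutoff, so 52% is statistically hard to distinguish from guessing.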

Here's what made the results so interesting. Participants weren't just wrong about the AI posts. They were confidently wrong. The average confidence rating on incorrect guesses was 3.8 out of 5. People felt sure about their answers and still missed it.

Where AI Writing Actually Fools People

After analyzing the responses, clear patterns emerged about what makes AI content undetectable versus what gives it away.

The areas where AI completely passed? Structure, grammar, and surface-level engagement. Nobody flagged the AI posts for poor sentence construction. Nobody noticed awkward transitions. The logical flow from paragraph to paragraph read naturally. In post-experiment interviews, several participants said the AI posts felt "professional and polished," which they actually associated more with human writing than AI.

This makes sense when you think about it. Modern language models have been trained on billions of examples of good writing. They've internalized the patterns of effective structure. They know how to build an argument. They know when to use short sentences for impact. They know how to transition between ideas without that clunky "furthermore" and "moreover" energy that earlier AI was famous for.

The vocabulary game has changed too. Early AI writing had a tell. It loved certain words. "Delve," "tapestry," "landscape," "crucial." In 2026, that specific fingerprint has largely disappeared. The models have learned to vary their word choice in ways that feel organic rather than rotated through a thesaurus.

Where Human Readers Still Catch On

But here's the thing. AI writing isn't invisible. The readers who did correctly identify the AI posts pointed to specific things that tipped them off, and their observations were fascinating.

The number one signal? Emotional specificity. Human writers referenced personal experiences, made oddly specific comparisons, and occasionally contradicted their own earlier points before resolving the tension. One human writer described a failed campaign by comparing it to "that feeling when you send a text to the wrong group chat." That kind of hyper-specific, slightly embarrassing analogy is something AI still struggles to produce naturally.

The second signal was what I'd call "productive messiness." Human writing sometimes wanders. It occasionally includes a tangent that doesn't perfectly serve the thesis but adds texture and voice. AI writing, even in 2026, tends to stay relentlessly on-topic. Every paragraph serves the central argument. Every example connects back to the main point. That sounds like a feature, but experienced readers sense it as artificial. Real humans are a little messy.

Third: opinions that cost something. Human writers took positions that could alienate part of their audience. One writer flat-out said that a popular marketing strategy was "a waste of budget for 90% of small businesses." AI content tends to hedge. It qualifies. It presents balanced perspectives. That diplomatic approach, while technically more accurate, reads as safe rather than authentic.

What the Detection Tools Say

I also ran all five posts through four major AI detection platforms: GPTZero, Originality.ai, Copyleaks, and Turnitin's AI detection module.

The results were all over the place. GPTZero flagged one human post as "likely AI-generated" with 78% confidence. Originality.ai correctly identified both AI posts but also flagged one human post. Copyleaks got four out of five right. Turnitin split the difference.
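To make one of those results concrete, here is a small sketch that turns a detector's hits and misses into standard metrics. Only the Originality.ai result is specified fully enough above to score this way (both AI posts flagged, plus one of the three human posts, which I'm reading as exactly one). The `DetectorResult` class and its field names are hypothetical helpers for illustration, not part of any detector's API.

```python
from dataclasses import dataclass


@dataclass
class DetectorResult:
    true_pos: int   # AI posts flagged as AI
    false_pos: int  # human posts flagged as AI
    true_neg: int   # human posts passed as human
    false_neg: int  # AI posts passed as human

    @property
    def accuracy(self) -> float:
        total = self.true_pos + self.false_pos + self.true_neg + self.false_neg
        return (self.true_pos + self.true_neg) / total

    @property
    def precision(self) -> float:
        # Of everything flagged as AI, how much really was AI?
        return self.true_pos / (self.true_pos + self.false_pos)

    @property
    def false_positive_rate(self) -> float:
        # Share of human posts wrongly flagged as AI.
        return self.false_pos / (self.false_pos + self.true_neg)


# Assumption: "flagged one human post" means exactly one of the three.
originality = DetectorResult(true_pos=2, false_pos=1, true_neg=2, false_neg=0)

print(f"accuracy: {originality.accuracy:.2f}")
print(f"precision: {originality.precision:.2f}")
print(f"false positive rate: {originality.false_positive_rate:.2f}")
```

The point of splitting it out this way: a detector can score four out of five and still wrongly accuse one in three human writers. With only five posts, though, every one of these numbers is a rough indication at best.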

No single detector achieved 100% accuracy. And this is the uncomfortable truth that the detection industry doesn't love talking about. As AI writing models improve, detection becomes fundamentally harder. It's an arms race where the generative side has structural advantages. Detection tools are pattern-matching against known AI tendencies, but those tendencies keep shifting.

I spoke with a computational linguist who studies this exact problem. Her take was blunt: "Byte-level detection will become statistically unreliable within the next 18 months. The only sustainable detection methods will be metadata-based, not content-based." In other words, we'll eventually need to track how content was made, not analyze the content itself.

What This Actually Means for Content Creators

If you're a blogger or content marketer reading this and feeling anxious, take a breath. This isn't a "robots are replacing you" article. The experiment revealed something more nuanced than that.

AI is now genuinely good at producing competent, publishable content. That's undeniable. Tools like Cliptics AI blog writer and others in the space can generate drafts that pass the reader sniff test. For scaling content operations, filling editorial calendars, and producing solid informational pieces, AI has crossed the competence threshold.

But competence isn't the same as connection. The posts that readers rated highest for engagement, the ones they actually wanted to keep reading, were overwhelmingly the human-written ones. Not because of grammar or structure. Because of voice.

Voice is the thing that makes you feel like a real person is talking to you. It's built from accumulated experience, specific opinions, and the willingness to be imperfect. Readers in my experiment couldn't always identify which posts were AI, but they consistently preferred the ones that had a distinct human perspective, even when they thought those posts were AI-generated.

The Hybrid Approach That's Actually Working

The smartest content teams I've talked to this year aren't choosing between human and AI. They're building workflows that use both.

The pattern looks like this. AI generates the structural backbone: research synthesis, outline, first draft, SEO optimization. Humans then bring the voice layer: personal anecdotes, strong opinions, weird analogies, the productive messiness that readers unconsciously crave.

One marketing director described it as "letting AI do the architecture and humans do the interior design." The bones are solid either way, but the personality is what makes people want to stay.

This isn't about using AI as a crutch. It's about recognizing that different parts of the writing process benefit from different strengths. AI is excellent at organizing information and maintaining consistency. Humans are excellent at being interesting.

The Uncomfortable Question

Here's where I have to be honest about something that came up during this experiment and hasn't left my mind since.

If readers get it wrong 48% of the time, and their ability to tell the difference is only going to shrink, does it matter whether content is AI-written? Is there an ethical obligation to disclose it? Is "human-written" going to become a premium label, like organic food or handmade goods?

I don't have clean answers. But I think the content industry needs to start having this conversation seriously rather than pretending AI writing is still obviously inferior. It isn't. The data shows it isn't.

What I do know is this. The writers who will thrive aren't the ones who can construct grammatically perfect paragraphs. AI already does that. The writers who'll thrive are the ones who can do what AI still can't. Be genuinely, specifically, messily human. Take real positions. Share real failures. Make readers feel like they're in a conversation, not reading a report.

That's not a small thing. In a world flooded with competent content, being authentically human might become the most valuable writing skill there is.