"Claude Code: Why It's the #1 AI Coding Tool in 2026 | Cliptics"

I switched to Claude Code about four months ago. Not because I wanted to. Because a colleague wouldn't stop talking about it.

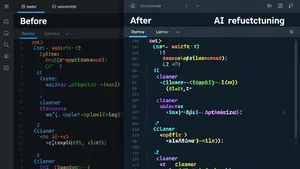

He kept saying things like "it just understands the whole codebase" and "I haven't written a boilerplate function in weeks." I nodded politely, kept using my existing setup, and moved on. Then one Friday afternoon I was stuck on a particularly nasty refactoring job across six interconnected files. I figured I'd give Claude Code ten minutes to prove itself or fail.

Three hours later, the refactor was done. Clean. Tested. Properly documented. And I was staring at my terminal wondering what just happened.

What Actually Makes It Different

The numbers tell one story. Claude Code, powered by Anthropic's Opus 4.6 model, scored 80.8% on SWE-bench Verified. That's a benchmark that tests whether an AI system can resolve real software engineering issues pulled from GitHub repositories. For context, most tools hover around 60 to 70%. A score above 80% suggests the model can handle genuinely complex, multi-file engineering tasks that would take a human developer hours.

But benchmarks only matter so much. What changed my mind was the context window.

Opus 4.6 can hold 1 million tokens in a single conversation. That's roughly the equivalent of an entire mid-sized codebase. You don't need to carefully select which files to feed it. You don't need retrieval pipelines or clever chunking strategies. You point it at your project and it explores the repo itself, pulling in whatever is relevant: the imports, the tests, the config files, the database schemas.

This sounds like a small thing until you've experienced what it means in practice. When I ask Claude Code to add a new API endpoint, it already knows what my existing endpoints look like. It follows the same patterns, uses the same error handling conventions, and even picks up on naming styles I've been using throughout the project. No other tool I've used does this as naturally.
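If you want to sanity-check whether your own project is in the "fits in a 1M-token window" range, here is a minimal sketch. It assumes roughly four characters per token, which is only a rule of thumb (real tokenizers vary by language and content), and the skip list and function names are hypothetical choices of mine, not anything Claude Code exposes.

```python
import os

# Assumption: ~4 characters per token is a rough rule of thumb;
# an actual tokenizer will give different numbers.
CHARS_PER_TOKEN = 4
CONTEXT_WINDOW_TOKENS = 1_000_000  # the 1M-token figure quoted above

# Hypothetical skip list; adjust for your project.
SKIP_DIRS = {".git", "node_modules", "__pycache__", ".venv", "dist", "build"}


def estimate_repo_tokens(root: str) -> int:
    """Return a rough token estimate for all readable text files under root."""
    total_chars = 0
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune skipped directories in place so os.walk does not descend into them.
        dirnames[:] = [d for d in dirnames if d not in SKIP_DIRS]
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                with open(path, encoding="utf-8") as f:
                    total_chars += len(f.read())
            except (UnicodeDecodeError, OSError):
                continue  # skip binary or unreadable files
    return total_chars // CHARS_PER_TOKEN


def fits_in_context(root: str, window: int = CONTEXT_WINDOW_TOKENS) -> bool:
    """True if the estimated token count is within the given window."""
    return estimate_repo_tokens(root) <= window
```

Calling `fits_in_context(".")` from a project root gives a quick yes or no. Treat the answer as a ballpark, since the estimate ignores the tokens used by the conversation itself.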

The Honest Comparison

I've spent real time with all three major options this year, so here's what I actually found.

Cursor is fast. Genuinely fast. It's an AI-native IDE, and autocomplete feels instant. For day-to-day editing (writing new functions, fixing small bugs, adding quick features), Cursor is hard to beat. It has over a million users for good reason.

GitHub Copilot is the most accessible. It works inside VS Code, JetBrains, Neovim, and practically every editor developers already use. At $10 a month, the price is right. For most developers doing standard work, Copilot handles 80% of what they need without any learning curve.

Claude Code is different because it is built around the terminal rather than an IDE or an autocomplete plugin. You open your terminal, point it at your project, and talk to it like a senior engineer sitting next to you. It reads through your repo, understands the architecture, and then executes multi-step tasks across multiple files without losing track of what it's doing.

The interesting pattern I've noticed among experienced developers is that nobody picks just one tool anymore. The most common stack is Cursor or Copilot for everyday editing combined with Claude Code for the hard stuff: complex refactors, debugging tricky race conditions, writing test suites for legacy code, and architectural decisions that touch dozens of files.

Surveys from early 2026 back this up. Professional developers now use an average of 2.3 AI coding tools. Claude Code sits at 41% adoption among professionals, slightly ahead of Copilot at 38%. But the "most loved" rating tells a clearer story: Claude Code at 46%, Cursor at 19%, Copilot at 9%.

Where It Shines (And Where It Doesn't)

Let me be direct about when Claude Code is worth it and when it isn't.

It excels at:

- Refactoring across many files while keeping everything consistent

- Writing comprehensive test suites that actually cover edge cases

- Debugging issues that span multiple services or modules

- Code review that catches architectural problems, not just lint errors

- Understanding unfamiliar codebases quickly when you join a new project

It struggles with:

- Quick inline suggestions while you type (Cursor and Copilot are better here)

- Price. Claude Max runs $100 to $200 per month, which is steep compared to Copilot's $10

- Developers who avoid the command line. The interface is terminal-first, so if you live in your IDE and never touch the terminal, there's a learning curve

The price question is real. At $100 to $200 a month for Claude Max, you need to be doing enough complex work to justify the cost. For a solo developer building side projects, Copilot is probably the smarter financial choice. For a professional working on production systems where bugs cost real money, Claude Code pays for itself faster than you'd think.
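One way to make that trade-off concrete is a quick break-even calculation. This is a back-of-the-envelope sketch, not a pricing model: the hourly rate is a hypothetical placeholder you should replace with your own, and the only figures taken from this article are the $100 and $200 monthly prices.

```python
def break_even_hours(monthly_cost: float, hourly_rate: float) -> float:
    """Hours of saved work per month at which the subscription pays for itself."""
    if hourly_rate <= 0:
        raise ValueError("hourly_rate must be positive")
    return monthly_cost / hourly_rate


# Assumption: a hypothetical billing rate, not a figure from this article.
HYPOTHETICAL_HOURLY_RATE = 75.0

for cost in (100, 200):  # the Claude Max price range quoted above
    hours = break_even_hours(cost, HYPOTHETICAL_HOURLY_RATE)
    print(f"${cost}/month breaks even at {hours:.1f} saved hours")
```

At that assumed rate, the plan breaks even at roughly 1.3 to 2.7 saved hours per month. Plug in your own rate and an honest estimate of hours saved before deciding.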

How I Actually Use It Day to Day

My workflow now looks like this. I open VS Code with Copilot for writing new code. Quick edits, small features, boilerplate. Then when I hit something complex, I switch to Claude Code in my terminal.

Last week I needed to migrate a payment processing system from one API to another. Twelve files, three services, dozens of edge cases around currency conversion and error handling. I gave Claude Code access to the entire repo, described what I needed, and watched it work through each file methodically. It caught two edge cases I hadn't even considered: one around timezone handling in subscription renewals, and another around retry logic when the new API returns a different error format.

That's the kind of work that would have taken me a full day. Claude Code finished it in about an hour, and the code was cleaner than what I would have written.

Companies like Netflix, Spotify, and Salesforce are already using Claude Code in their workflows. That's not marketing fluff. When engineering teams at that scale adopt a tool, it means something about reliability and capability.

The Bottom Line

Here's my honest take after four months of daily use. Claude Code isn't the best AI coding tool for everyone. But it's the best AI coding tool for hard problems.

If you write code professionally, if you deal with complex systems, if you've ever spent an entire afternoon tracking down a bug that lived across three files and two services, Claude Code will change how you work. The 1M token context window and Opus 4.6's reasoning capabilities aren't marketing features. They're practical advantages that show up every single day.

95% of professional developers now use AI tools at least weekly. The question isn't whether to use AI for coding anymore. It's which tool matches the kind of work you do. For complex, multi-file, architecturally aware coding assistance, nothing I've used comes close to Claude Code.

Try the AI tools on Cliptics to explore what else is possible with AI in your creative and development workflows. And if you're already using Claude Code, I'd love to hear how your experience compares.